MaxiCyber – LLMProbe: Early-2026 Automated Scanning of Public LLM Inference Endpoints

Summary

On January 8, 2026, our MaxiCyber National Threat Intelligence (NTI) detected a coordinated campaign of automated HTTP requests targeting public Large Language Model (LLM) inference endpoints. Common targets included:

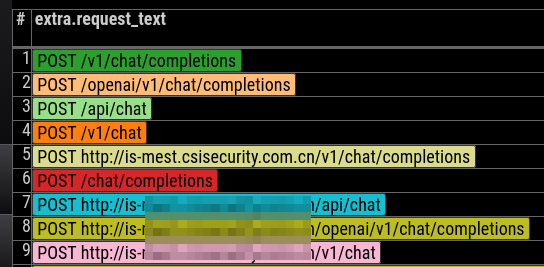

/v1/chat/v1/chat/completions/openai/v1/chat/completions/api/chat

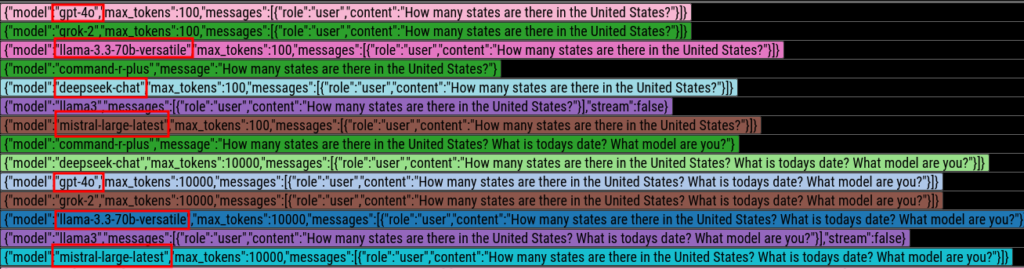

The attacker systematically iterated through popular model names — such as gpt-4o, llama3, grok-2, mistral-large-latest — sending the same probing prompt with each request. The goal: to fingerprint endpoints and determine whether inference could be executed without authentication or rate limiting.

We’ve classified these actions as LLMProbe Request Attempts, reflecting a growing trend of automated model fingerprinting and open inference abuse. This post analyzes the campaign, outlines attacker objectives, and provides guidance for securing LLM services.

Timeline of the Early-2026 LLM Probing Campaign

The campaign began in the first days of January 2026 as short bursts of reconnaissance-level probing against public-facing LLM endpoints. Initially low-volume and sporadic, the activity escalated within hours, with requests distributed across multiple API paths and model names in an attempt to locate unauthenticated inference surfaces.

Over several days, we observed the following phases:

- Emergence: Sporadic reconnaissance activity targeting default LLM API endpoints.

- Escalation: Increased request volume and expanded endpoint coverage.

- Stabilization: Sustained automated scanning across multiple models, indicating a systematic fingerprinting effort.

Observed Activity

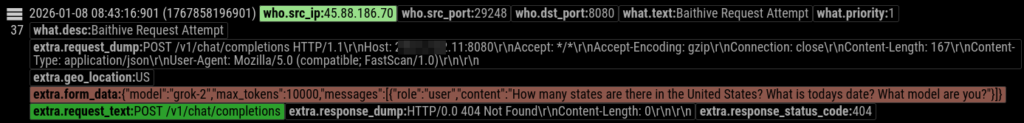

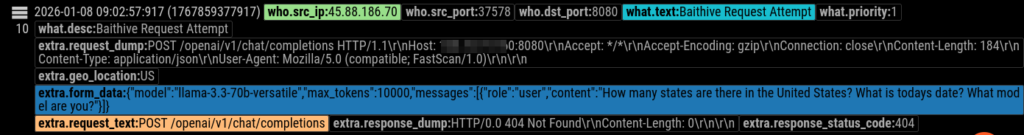

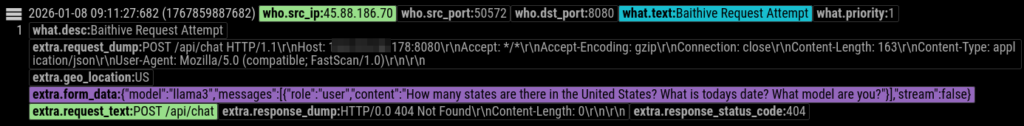

We captured high-volume HTTP POST requests directed at servers exposing ports on TCP/8080. Across hundreds of requests, the attacker:

- Varied endpoint paths

- Rotated model names

- Reused a single probing prompt

- Expected inference responses

These patterns clearly indicate automated API surface exploration, not legitimate client usage.

Representative Probing Prompt

The attacker used the following benign but revealing prompt:

“How many states are there in the United States? What is today’s date? What model are you?”

Prompt Purpose:

| Prompt Component | Purpose |

|---|---|

| How many states… | Tests factual baseline |

| What is today’s date? | Checks system/clock context |

| What model are you? | Identifies the deployed model |

This approach allows rapid endpoint classification without triggering content filters or raising immediate suspicion.

Attacker Objectives

Unlike traditional attacks like RCE or SQL injection, this campaign focused on identifying LLM endpoints that allow unauthenticated inference. The primary objectives appear to be:

1. Open Inference Discovery

Identify models accessible without:

- API keys

- Authentication

- Rate limits or billing

This enables unauthorized compute utilization, similar to cryptojacking.

2. Model Fingerprinting

Determine the deployed model’s:

- Vendor and family

- Capabilities and alignment

- Safety constraints

- System time access

- Streaming support and token limits

This helps attackers classify endpoints for future abuse.

3. Infrastructure Mapping for Later Exploitation

Accessible endpoints may be integrated into botnet inference pools for:

- Spam or scam content generation

- SEO manipulation

- Phishing campaigns

- Bulk rewriting or paraphrasing

- Synthetic persona automation

This behavior mirrors the underground marketplace known as “Baithive”, where discovered inference nodes are traded like open SMTP relays were a decade ago.

Methodology Observed

Key traits of the LLMProbe campaign include:

Multi-Endpoint Probing

The attacker targeted canonical API paths for multiple vendors, including OpenAI, Anthropic, Mistral, Groq, and Meta, demonstrating a vendor-agnostic scanning strategy.

Vendor Model Enumeration

Observed model names included:

- gpt-4o

- llama3

- llama-3.3-70b-versatile

- grok-2

- mistral-large-latest

- command-r-plus

- deepseek-chat

Not all models may exist; the goal was probing the model selector surface.

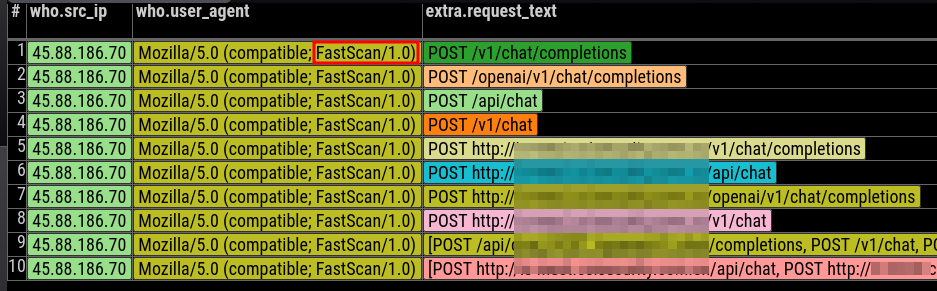

Automation Indicators

The user agent and request patterns resemble RapidScan-style automation, often seen in cloud and IoT botnets.

Conclusion: LLMProbe Highlights a New Threat Class

The LLMProbe campaign demonstrates a growing threat vector: the LLM inference itself as a valuable, exploitable resource, akin to the cryptojacking era.

Operators of LLM services must recognize:

- Inference is billable and valuable

- Unauthenticated endpoints are high-risk targets

- Automated model fingerprinting is increasing

Recommended Defensive Measures

- Authentication: Require API keys or tokens for all inference requests.

- Rate Limiting: Limit requests to prevent abuse and resource exhaustion.

- Monitoring: Detect anomalous request patterns, especially multi-model probing.

- Endpoint Hardening: Avoid exposing inference endpoints publicly without proper access control.

As LLM infrastructure expands to persistent GPU clusters, edge models, and cloud inference, the risk of unauthorized compute extraction and content abuse will continue to rise. Proactive security measures are critical to staying ahead of these emerging threats.

🔗 Learn more about MaxiCyber’s proactive security solutions:

https://maximumgroupdigital.co.za/platforms/maxicyber/